Release Asuswrt-Merlin 3006.102.7 is now available

- Thread starter RMerlin

> Same here, but no nodes

You don't know what you're missing... so much fun...

Clark Griswald

Very Senior Member

> You don't know what you're missing... so much fun...

Nodes are not always "fun", and sometimes they are scary.

Casa Griswald only uses a Main and an AP. No network issues with latest RMerlin FW.

128bit

Senior Member

> Thank you as always!
>
> If anyone installs this on their (ROG) GT-AX11000 Pro, would appreciate any follow ups/comments. Both the August and November releases displayed quirks, was awaiting this to see how it goes. Good weekend to all, S.

the only weird behavior i've noticed since my 102.7 update was with the usb 3.0 health scanner panel. when doing a rescan of my nas attached usb 3.0 ssd, at ~50% i get logged out! after logging back in, the scan shows completion. this behavior is new with 102.7 as that 860 evo ssd has been solid on several asus devices over the years.

> the only weird behavior i've noticed since my 102.7 update was with the usb 3.0 health scanner panel. when doing a rescan of my nas attached usb 3.0 ssd, at ~50% i get logged out! after logging back in, the scan shows completion. this behavior is new with 102.7 as that 860 evo ssd has been solid on several asus devices over the years.

Check the system log. The most likely reason I can think of is that your router runs out of memory while doing the scan, causing service crashes. In the past, running a fs check on a large disk has always been a problem due to the amount of RAM required for it.

128bit

Senior Member

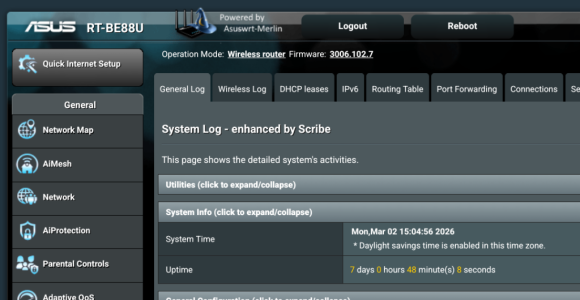

> Check the system log. The most likely reason I can think of would be your router runs out of memory while doing the scan, causing service crashes. In the past running a fs check on a large disk has always been a problem due to the amount of RAM required for it.

not seeing anything specific re ram/memory in the syslog.

>>> >> >

Mar 2 11:14:39 disk_monitor: re-mount partition

Mar 2 11:14:39 kernel: tntfs info (device sda1, pid 18165): ntfs_fill_super(): fail_safe is enabled.

Mar 2 11:14:39 kernel: tntfs info (device sda1, pid 18165): load_system_files(): NTFS volume name 'gpdNAS-1t', version 3.1 (cluster_size 4096, PAGE_SIZE 4096).

Mar 2 11:14:39 disk_monitor: USB ntfs fs at /dev/sda1 mounted on /tmp/mnt/ssdNAS-1t

Mar 2 11:14:39 usb: USB ntfs fs at /dev/sda1 mounted on /tmp/mnt/gpdNAS-1t.

Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc stop_httpd

Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc start_httpd

Mar 2 11:14:39 rc_service: waitting "stop_httpd"(last_rc:stop_httpd) via disk_monitor ...

Mar 2 11:14:40 rc_service: disk_monitor 4555:notify_rc start_samba

Mar 2 11:14:40 rc_service: waitting "start_httpd"(last_rc:start_httpd) via disk_monitor ...

< << <<<

on the system status panel, ram typically shows ~47% but i'm not sure how that may change during the scan. shortly after the remount msg is when i get logged out. this is just a snippet but no other messages re ram/memory show on a fresh log.
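For anyone wanting to see how RAM moves while the scan runs, here is a minimal sketch that turns `/proc/meminfo` text into a rough "percent used" figure. It assumes Python is available somewhere (on the router via Entware, or on a PC after copying the output of `cat /proc/meminfo` across). The function names and the sample numbers are made up for illustration, and the web UI may well calculate its percentage differently.

```python
def parse_meminfo(text):
    """Return /proc/meminfo fields as a dict of integers (kB)."""
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    return fields


def used_percent(fields):
    """Rough percent of RAM in use, preferring MemAvailable over MemFree."""
    total = fields["MemTotal"]
    free = fields.get("MemAvailable", fields.get("MemFree", 0))
    return round(100.0 * (total - free) / total, 1)


# Made-up sample values, for illustration only (not taken from any router in this thread).
SAMPLE = """MemTotal:        1000000 kB
MemFree:          200000 kB
MemAvailable:     530000 kB
"""

print(used_percent(parse_meminfo(SAMPLE)))
```

Running the two functions against a fresh read of `/proc/meminfo` every couple of seconds during a rescan would show whether memory actually climbs before the logout.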

dave14305

Part of the Furniture

> Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc stop_httpd
>
> Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc start_httpd
>
> Mar 2 11:14:39 rc_service: waitting "stop_httpd"(last_rc:stop_httpd) via disk_monitor ...
>
> Mar 2 11:14:40 rc_service: disk_monitor 4555:notify_rc start_samba
>
> Mar 2 11:14:40 rc_service: waitting "start_httpd"(last_rc:start_httpd) via disk_monitor ...

I suppose it’s this part of disk_monitor that stops the httpd daemon. Perhaps this isn’t meant to be there:

asuswrt-merlin.ng/release/src/router/rc/usb.c at 58b0b7acd100117014970d01741952939cd79ad8 · RMerl/asuswrt-merlin.ng

Third party firmware for Asus routers (newer codebase) - RMerl/asuswrt-merlin.ng
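To check a longer syslog for the same pattern, a small sketch like the one below pulls out every `notify_rc` event and the process that issued it. The line format is assumed from the log lines posted in this thread only; the regex and the `notify_events` helper are illustrative and not part of the firmware.

```python
import re

# Matches lines such as:
#   Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc stop_httpd
# (format assumed from the log excerpt posted in this thread)
NOTIFY_RE = re.compile(
    r"^(?P<stamp>\w{3}\s+\d+\s+\d{2}:\d{2}:\d{2})\s+rc_service:\s+"
    r"(?P<caller>\S+)\s+(?P<pid>\d+):notify_rc\s+(?P<event>\S+)\s*$"
)


def notify_events(log_text, caller=None):
    """Return (timestamp, caller, event) for each notify_rc line, optionally filtered by caller."""
    events = []
    for line in log_text.splitlines():
        m = NOTIFY_RE.match(line.strip())
        if m and (caller is None or m.group("caller") == caller):
            events.append((m.group("stamp"), m.group("caller"), m.group("event")))
    return events


# Lines copied from the log excerpt earlier in the thread.
LOG = """Mar 2 11:14:39 disk_monitor: re-mount partition
Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc stop_httpd
Mar 2 11:14:39 rc_service: disk_monitor 4555:notify_rc start_httpd
Mar 2 11:14:39 rc_service: waitting "stop_httpd"(last_rc:stop_httpd) via disk_monitor ...
Mar 2 11:14:40 rc_service: disk_monitor 4555:notify_rc start_samba
"""

for stamp, who, event in notify_events(LOG, caller="disk_monitor"):
    print(stamp, who, event)
```

If `stop_httpd` and `start_httpd` from `disk_monitor` line up with the moment of the logout, that points at an explicit restart rather than a crash.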

> I suppose it’s this part of disk_monitor that stops the httpd daemon. Perhaps this isn’t meant to be there:
>
> asuswrt-merlin.ng/release/src/router/rc/usb.c at 58b0b7acd100117014970d01741952939cd79ad8 · RMerl/asuswrt-merlin.ng (github.com)

The real question is why Asus is setting DEBUG_RCTEST in the Makefile, which I would expect should only be enabled for test purposes. I just checked the GPL code itself and it's there.

I also see this (unrelated) typo next to a DEBUG_RCTEST section I just reviewed:

Code:

#ifdef HND_ROTUER

Well...

That DEBUG_RCTEST constant has been present since at least 2017 according to git blame (the initial GPL merge when the repo was started), so at this point I'd rather not touch it. This enables the "rc" applet, which may potentially be used by other components of the firmware.

That code block in usb.c is also present in the GPL, so it's not a merge issue on my part.

dave14305

Part of the Furniture

> I also see this (unrelated) typo next to a DEBUG_RCTEST section I just reviewed:

I think it’s French.

128bit

Senior Member

> The real question is why Asus is setting DEBUG_RCTEST in the Makefile, which I would expect should only be enabled for test purposes. I just checked the GPL code itself and it's there.
>
> I also see this (unrelated) typo next to a DEBUG_RCTEST section I just reviewed:
>
> Code:
>
> #ifdef HND_ROTUER
>
> Well...

hey guys, been working this and another connect problem but fwiw, "claude" called out the same possibility with ram as u did but after seeing the log, thought it may be a bug. i realize "ai" can be sensitive on this board but i assure you, i'm just trying to learn - below the text from a claude session. the log it had may have been larger.

>>>

This log tells a very clear story. Let me walk through it precisely.

What actually happened:

At 11:14:29, the disk rescan triggered and immediately began tearing down services — Samba, FTP, avahi, wsdd2, iTunes daemon. At 11:14:39, after remounting the NTFS partition, disk_monitor called stop_httpd then start_httpd, which restarted dnsmasq entirely — both instances (PID 4456 and 4451 get SIGTERM at 11:14:41, replaced by 18237 and 18242).

That dnsmasq restart is your problem. The ESP's DHCP negotiation was in progress during the 11:14:28–11:14:41 window. The old dnsmasq was killed mid-transaction. The new dnsmasq came up, saw the Discovers, sent Offers — but the ESP was in a confused state from the previous incomplete exchange and the timing kept missing. It didn't complete a Request/Ack until well after the dust settled.

This is also your UI logout. httpd was stopped and restarted as part of the same chain reaction. That's not a memory issue — that's disk_monitor explicitly calling stop_httpd/start_httpd as part of its rescan sequence. Merlin's suspicion about memory may be a red herring; the logout is by design (poorly), not by crash.

The core issue: ASUS's disk_monitor rescan sequence is unnecessarily nuclear — it tears down and restarts httpd and dnsmasq for what should be a filesystem-level operation. That's a Merlin/ASUS bug worth filing properly on SNBForums with this exact log.

What Copilot likely missed: it probably focused on the dnsmasq restart in isolation without connecting it back to disk_monitor being the trigger, and without recognizing that the httpd restart and the DHCP failure are both children of the same parent event — not two separate problems.

<<< << <

that was claude's 2 cents. so don't kill the messenger. the reference to copilot comes from a similar session with that ai. copilot and gemini are typically 10-20% accurate but i often start there.

dave14305

Part of the Furniture

> hey guys, been working this and another connect problem but fwiw, "claude" called out the same possibility with ram as u did but after seeing the log, thought it may be a bug. i realize "ai" can be sensitive on this board but i assure you, i'm just trying to learn - below the text from a claude session. the log it had may have been larger.

I would try to recreate this problem on 102.6. Since this code has been there a while, maybe somehow the disabling of AiCloud and other USB adjacent features causes something not to happen. It’s only a wild guess, but Claude tends to agree with me.

dave14305

Part of the Furniture

I think it’s suspicious that this DEBUG_RCTEST in usb.c immediately follows a RTCONFIG_CLOUDSYNC section. I wonder if it’s meant to be within it.

If Asus decided to put an httpd restart there it might be for a reason, so I'm not really keen on changing that behaviour.

> I think it’s suspicious that this DEBUG_RCTEST in usb.c immediately follows a RTCONFIG_CLOUDSYNC section. I wonder if it’s meant to be within it.

The whole function will mount a partition, so I can see restarting httpd after mounting the volume to ensure that httpd is aware of the system level change.

That code was added in GPL 3006_102_34369.

Without knowing the intention behind it it's hard to tell if it's legitimate or not. The fact that it lies within a debug-related define that has been permanently set for many years makes it even murkier. I would probably have to ask them to know for sure, assuming anyone currently on the team would remember why it's there.

junior120872

Regular Contributor

> AXE-16000. Have reverted back to 3006.102.6. Losing devices, both wired and wireless. Reboot brings them back and then they disappear around two hours later. Might have something to do with IPV6. Don't see anything in the logs that pops out at me.

I've always noticed funny things with networkmap losing its brains a little bit. I usually have around 124 devices connected to my mesh on average. In the evenings it's more, in the mornings it's less. If I notice any discrepancies in those numbers I'll just run a 'killall -HUP networkmap' and it fixes it. I'm not running ipv6 though. I saw some fixed issues around ipv6 earlier in the thread with restarting some services via cron. You may want to look at those.

128bit

Senior Member

> I would try to recreate this problem on 102.6. Since this code has been there a while, maybe somehow the disabling of AiCloud and other USB adjacent features causes something not to happen. It’s only a wild guess, but Claude tends to agree with me.

i can assure you that with every new release, i dismount then physically remove the usb 3 ssd. once the new fw is loaded and the mesh node operational, that process is reversed. been doin that for years and never experienced this behavior. going back to 102.6 would be painful as it never failed on that fw.

CaptnDanLKW

Senior Member

> I've always noticed funny things with networkmap losing its brains a little bit. I usually have around 124 devices connected to my mesh on average. In the evenings it's more, in the mornings it's less. If I notice any discrepancies in those numbers I'll just run a 'killall -HUP networkmap' and it fixes it. I'm not running ipv6 though. I saw some fixed issues around ipv6 earlier in the thread with restarting some services via cron. You may want to look at those.

Same here. When things start to look odd, I also notice the device counts on the AI Mesh page do not add up to the number on the main page. Also the AI Mesh counts seem to be frozen, and the station binding/unbinding doesn't work (or it does, but the AI Mesh interface doesn't reflect it).
